Agenta vs OpenMark AI

Side-by-side comparison to help you choose the right AI tool.

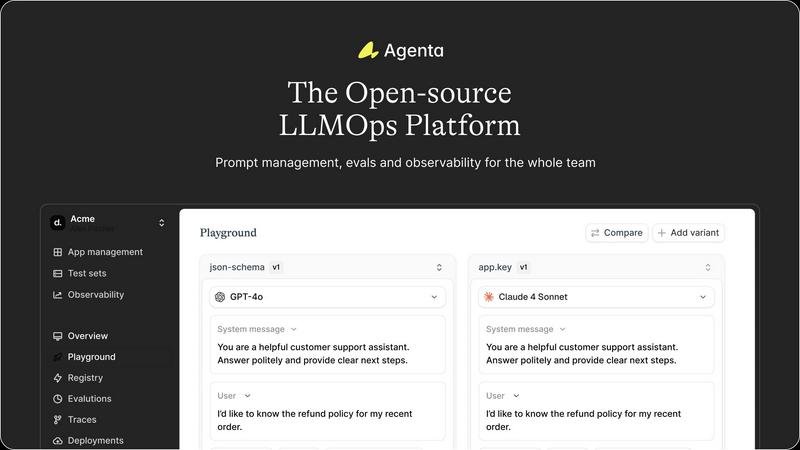

Agenta empowers teams to build reliable AI apps together with integrated LLMOps tools.

Last updated: March 1, 2026

Stop guessing and find the perfect AI model for your task by instantly benchmarking over 100 options for cost, speed, and quality.

Last updated: March 26, 2026

Visual Comparison

Agenta

OpenMark AI

Feature Comparison

Agenta

Unified Playground & Versioning

Agenta provides a centralized playground where your team can iterate on prompts and compare different models side-by-side in real-time. Every change is automatically versioned, creating a complete history of your experiments. This model-agnostic approach prevents vendor lock-in and ensures you can always use the best model for the task. Found an error in production? You can instantly save it to a test set and debug it directly within the playground, closing the feedback loop rapidly.

Systematic Evaluation Framework

Replace guesswork with evidence using Agenta's powerful evaluation system. Create a systematic process to run experiments, track results, and validate every single change before deployment. The platform supports any evaluator you need, including LLM-as-a-judge, custom code, or built-in metrics. Crucially, you can evaluate the full trace of an agent's reasoning, not just the final output, and seamlessly integrate human feedback from domain experts into your evaluation workflow.

Production Observability & Debugging

Gain complete visibility into your AI systems with comprehensive observability. Agenta traces every request, allowing you to pinpoint exact failure points when things go wrong. You and your team can annotate these traces collaboratively or gather direct feedback from end-users. With a single click, turn any problematic trace into a test case. Live, online evaluations continuously monitor performance and proactively detect regressions, ensuring your application remains reliable.

Structured Team Collaboration

Break down silos and bring product managers, domain experts, and developers into one unified workflow. Agenta provides a safe, intuitive UI for non-technical experts to edit prompts and run experiments without touching code. Everyone can participate in running evaluations and comparing results, fostering data-driven decisions. The platform offers full parity between its API and UI, ensuring seamless integration of programmatic and manual workflows into a single hub of truth.

OpenMark AI

Plain Language Task Description

Ditch complex configurations and scripting. With OpenMark AI, you simply describe the task you need to benchmark in everyday language. The platform intelligently interprets your goal, whether it's "extract dates and names from customer emails" or "generate three creative taglines for a new product." This intuitive approach puts the focus on your objective, not on engineering a test harness, making advanced benchmarking accessible to everyone on your team.

Multi-Model Comparison in One Session

Break free from running isolated, manual tests. This core feature allows you to execute the same set of prompts against dozens of leading AI models simultaneously within a single benchmarking session. You get an immediate, side-by-side results dashboard that contrasts performance across all contenders, saving you immense time and providing a clear, comparative view that isolated tests can never offer.

Real-World Performance Metrics

Go beyond theoretical benchmarks. OpenMark AI makes real API calls to each model, providing metrics that matter for production: actual cost per request, true latency, and scored output quality for your specific task. Most importantly, it runs multiple repetitions to show stability and variance, revealing if a model is consistently good or just occasionally lucky. This is the data you need to trust a model before it goes live.

Hosted Credits (No API Key Management)

Simplify your workflow dramatically. Instead of managing and securing a multitude of API keys from different providers like OpenAI, Anthropic, and Google, you simply use OpenMark AI credits. The platform handles all the backend connections, allowing you to benchmark any supported model instantly. This removes a major barrier to entry and lets you focus on analysis, not administrative setup.

Use Cases

Agenta

Accelerating Agent Development

Teams building complex AI agents with multi-step reasoning can use Agenta to experiment with different reasoning chains, evaluate each intermediate step for accuracy, and debug logic failures in the trace. This transforms a black-box process into a transparent, iterative one, significantly reducing time-to-market for reliable agentic applications.

Centralizing Enterprise Prompt Management

For organizations where prompts are scattered across emails, Slack, and documents, Agenta serves as the single source of truth. It allows centralized version control, structured A/B testing of prompt variations, and controlled rollouts, ensuring consistency, governance, and optimal performance across all LLM-powered features.

Implementing Rigorous QA for LLM Features

Product and QA teams can establish a robust validation pipeline using Agenta. They can create persistent test sets from real user interactions, run automated evaluations against every new prompt or model version, and integrate human-in-the-loop reviews from domain experts to catch nuanced failures before they reach production.

Streamlining Cross-Functional AI Projects

When projects require input from developers, product managers, and subject matter experts, Agenta's collaborative environment is essential. It enables non-coders to safely tweak prompts and run evaluations, while developers manage the infrastructure, all working from the same platform with shared visibility, eliminating miscommunication and accelerating iteration.

OpenMark AI

Validating a Model Before Feature Shipment

A product team has built a new AI-powered summarization feature and needs to choose the final model. They use OpenMark AI to benchmark GPT-4, Claude 3, and Gemini against their actual user prompts. By comparing real cost, speed, and consistency of summary quality, they confidently select the optimal model that balances performance with budget, ensuring a successful launch.

Cost-Efficiency Analysis for Scaling Applications

A developer building a high-volume customer support agent needs to optimize long-term costs. They benchmark several high-quality and mid-tier models on their ticket classification task. OpenMark AI reveals that while a premium model is slightly more accurate, a specific mid-tier model offers 95% of the quality at 40% of the cost, providing a clear, data-backed rationale for a more sustainable scaling strategy.

Ensuring Output Consistency for Critical Workflows

A company uses AI to extract structured data from legal documents, where inconsistency is unacceptable. They run their extraction prompts through OpenMark AI with multiple repeat runs. The results show that while some models have high peak scores, their variance is too great. They choose the model with excellent, stable consistency, guaranteeing reliable performance in every real-world execution.

Rapid Prototyping and Model Selection for New Projects

A startup is exploring AI capabilities for a new research assistant tool. Instead of spending weeks integrating and testing different APIs, they use OpenMark AI to quickly describe various Q&A and synthesis tasks. In minutes, they get a ranked shortlist of the top-performing models for their domain, accelerating their prototyping phase and directing their development efforts with confidence.

Overview

About Agenta

Agenta is the transformative, open-source LLMOps platform designed to empower AI teams to build and ship reliable, high-performance LLM applications with confidence. It directly addresses the core chaos of modern AI development, where unpredictable models meet scattered workflows, siloed teams, and a lack of validation. Agenta provides the single source of truth your entire team needs, from developers and engineers to product managers and domain experts. It centralizes the entire LLM development lifecycle into one cohesive platform, enabling structured collaboration and replacing guesswork with evidence. The core value proposition is clear: move from fragmented, risky processes to a unified workflow where you can experiment intelligently, evaluate systematically, and observe everything in production. This empowers teams to iterate faster, validate every change, and debug issues precisely, ultimately transforming how reliable AI products are built and scaled. By integrating prompt management, evaluation, and observability, Agenta is the essential infrastructure for any team committed to shipping trustworthy AI.

About OpenMark AI

Stop playing a guessing game with AI models. OpenMark AI is your definitive platform for task-level LLM benchmarking, transforming uncertainty into data-driven confidence. It's a powerful web application built for developers and product teams who need to make critical, pre-deployment decisions about which AI model to ship with their feature. Simply describe the task you want to test in plain language—be it classification, translation, data extraction, RAG, or any other workflow. OpenMark AI then runs your exact prompts against a vast catalog of 100+ models in a single, unified session. You get side-by-side comparisons of real-world performance metrics: cost per request, latency, scored output quality, and crucially, stability across repeat runs. This means you see the variance and reliability of a model, not just a single lucky output. By using a hosted credit system, it eliminates the tedious setup of configuring separate API keys for OpenAI, Anthropic, Google, and others for every comparison. Move beyond marketing datasheets and discover the true cost efficiency—the best quality relative to what you actually pay. OpenMark AI empowers you to ship AI features that are not only powerful but also predictable, affordable, and perfectly suited to your unique needs.

Frequently Asked Questions

Agenta FAQ

Is Agenta really open-source?

Yes, Agenta is a fully open-source platform. You can dive into the code on GitHub, contribute to the project, and self-host the entire platform. This ensures transparency, avoids vendor lock-in, and allows for deep customization to fit your specific infrastructure and workflow needs.

How does Agenta integrate with existing frameworks?

Agenta is designed for seamless integration. It works with popular LLM frameworks like LangChain and LlamaIndex, and is model-agnostic, supporting APIs from OpenAI, Anthropic, Cohere, and open-source models. You can integrate it into your existing stack without a major overhaul.

Can non-technical team members use Agenta effectively?

Absolutely. A core design principle of Agenta is to empower the entire team. It provides an intuitive web UI that allows product managers and domain experts to edit prompts, run experiments, and evaluate results without writing any code, bridging the gap between technical development and business expertise.

How does Agenta help with debugging in production?

Agenta provides full observability by tracing every LLM call and user request. When an error occurs, you can examine the complete trace to see the exact input, model calls, intermediate steps, and final output. You can annotate these traces, share them with your team, and instantly convert any problematic trace into a test case for future validation.

OpenMark AI FAQ

How is OpenMark AI different from standard benchmark leaderboards?

Standard leaderboards use fixed, general-purpose datasets (like MMLU) that may not reflect your specific use case. OpenMark AI is built for your tasks. You provide the exact prompts and criteria, and we run real API calls, giving you metrics on cost, latency, and consistency for your unique workflow. We show you what will work in practice, not just in theory.

What does "stability across repeat runs" mean and why is it important?

It means we run your task multiple times with the same model to see if the output quality and behavior are consistent. A model that gets a perfect score once but fails the next three times is a high-risk choice for production. We show you the variance, so you can select a model that delivers reliable, predictable results every time for your users.

Do I need to bring my own API keys for the models?

No, that's the key convenience! OpenMark AI operates on a credit system. You purchase credits and use them to run benchmarks across our entire catalog of 100+ models. We manage all the provider integrations (OpenAI, Anthropic, Google, etc.) on the backend, so you never have to configure, rotate, or secure a single external API key.

What kind of tasks can I benchmark with OpenMark AI?

You can benchmark virtually any task you would use an LLM for. This includes text classification, translation, creative writing, data extraction and structuring, question answering, code generation, agentic reasoning, RAG system evaluation, image analysis (for multimodal models), and much more. If you can describe it, you can benchmark it.

Alternatives

Agenta Alternatives

Agenta is a transformative, open-source LLMOps platform designed to empower teams to build and ship reliable AI applications. It belongs to the development category, specifically addressing the modern challenges of managing the entire LLM lifecycle from experimentation to production. Teams often explore alternatives for various reasons. These can include specific budget constraints, the need for different feature sets, or a requirement to integrate with an existing proprietary platform or cloud ecosystem. Every team's journey to building robust AI is unique, and finding the right tooling fit is a crucial step. When evaluating any platform, focus on what will truly unlock your team's potential. Look for solutions that foster collaboration, provide rigorous evaluation to replace guesswork, and offer the flexibility to adapt to your evolving needs without locking you into a single vendor or workflow.

OpenMark AI Alternatives

OpenMark AI is a developer tool for task-level benchmarking of large language models. It helps teams make data-driven decisions by running real prompts against a vast catalog of LLMs, comparing cost, speed, quality, and stability in a single browser session. Developers often explore alternatives for various reasons. They might need a different pricing structure, require deeper integration with a specific cloud platform, or seek more specialized testing features like automated regression suites. The ideal tool varies based on a team's unique workflow and deployment stage. When evaluating other solutions, focus on what matters for your project. Look for genuine, real-time API testing, not cached benchmarks. Prioritize tools that measure consistency and variance, not just a single output. Finally, ensure the platform provides actionable metrics that directly tie model performance to your specific use case and budget.