Hostim.dev vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

Hostim.dev

Easily deploy your full app stack on EU servers with built-in databases for fast, secure, and predictable hosting.

Last updated: March 1, 2026

OpenMark AI

Stop guessing which AI model suits your task. Benchmark more than 100 models on cost, speed, and quality and compare the results side by side.

Last updated: March 26, 2026

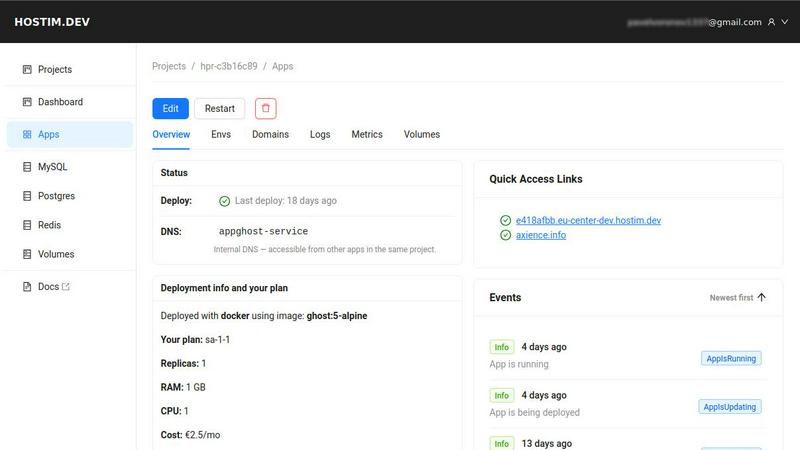

Visual Comparison

Hostim.dev

OpenMark AI

Feature Comparison

Hostim.dev

Simple Deployment

Hostim.dev deploys applications from Docker images, Git repositories, or Docker Compose files, so you don't need extensive DevOps knowledge. Paste your Compose file and your app can be live in minutes.

Built-in Databases and Storage

Hostim.dev automatically provisions common databases and caches such as MySQL, PostgreSQL, and Redis, along with storage. Everything is pre-wired to your app, so you can focus on the application itself rather than the underlying infrastructure.

Scalability at Your Fingertips

Adjust CPU and RAM directly from the web interface without downtime, so your resources can keep pace with your project's needs as they change.

Secure Environments by Default

Each project runs in its own fully isolated environment, designed to keep your data secure and private. Automatic HTTPS, live logs, and monitoring are included by default.

OpenMark AI

Plain Language Task Description

Skip complex configuration and scripting. With OpenMark AI, you describe the task you want to benchmark in everyday language, such as "extract dates and names from customer emails" or "generate three creative taglines for a new product," and the platform interprets your goal. The focus stays on your objective rather than on engineering a test harness, so anyone on your team can run a benchmark.

Multi-Model Comparison in One Session

Instead of running isolated, manual tests, you execute the same set of prompts against dozens of leading AI models in a single benchmarking session. The results appear in one side-by-side dashboard that contrasts performance across all contenders, which saves time and gives you a comparative view that separate tests cannot easily provide.
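The session model described above, where one set of prompts fans out to many models at once, can be sketched as follows. This is a minimal illustration, not OpenMark AI's implementation: it assumes a hypothetical async interface in which each model is a function from prompt to text, and the helper `make_fake_model` stands in for real provider calls (it only sleeps and echoes the prompt). No real API is contacted.

```python
import asyncio
import time
from dataclasses import dataclass
from typing import Awaitable, Callable

# Hypothetical model interface: an async function that takes a prompt and returns text.
ModelFn = Callable[[str], Awaitable[str]]


@dataclass
class RunResult:
    model: str
    prompt: str
    output: str
    latency_s: float


async def run_one(name: str, model: ModelFn, prompt: str) -> RunResult:
    # Time a single model call with a monotonic, high-resolution clock.
    start = time.perf_counter()
    output = await model(prompt)
    return RunResult(name, prompt, output, time.perf_counter() - start)


async def benchmark_session(models: dict[str, ModelFn], prompts: list[str]) -> list[RunResult]:
    # Launch every (model, prompt) pair concurrently; gather keeps the input order.
    tasks = [run_one(name, fn, p) for name, fn in models.items() for p in prompts]
    return await asyncio.gather(*tasks)


def make_fake_model(delay_s: float, tag: str) -> ModelFn:
    # Hypothetical stand-in for a provider API: sleeps, then echoes the prompt.
    async def call(prompt: str) -> str:
        await asyncio.sleep(delay_s)  # simulates network and inference time
        return f"[{tag}] {prompt.upper()}"
    return call


if __name__ == "__main__":
    models = {"model-a": make_fake_model(0.05, "A"), "model-b": make_fake_model(0.02, "B")}
    results = asyncio.run(benchmark_session(models, ["summarize this", "classify that"]))
    for r in results:
        print(f"{r.model:8} {r.latency_s * 1000:6.1f} ms  {r.output}")
```

Because all calls run concurrently, the whole session takes roughly as long as the slowest single call rather than the sum of all of them, which is what makes a many-model comparison practical in one sitting.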

Real-World Performance Metrics

Go beyond theoretical benchmarks. OpenMark AI makes real API calls to each model and reports the metrics that matter in production: actual cost per request, measured latency, and scored output quality for your specific task. It also runs multiple repetitions to show stability and variance, revealing whether a model is consistently good or only occasionally lucky. This is the data you need to trust a model before it goes live.
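To make the stability idea concrete, here is a minimal sketch of how repeat-run scores for one model might be summarized. It assumes each run has already been scored on a 0 to 1 scale; the scoring method, model names, scores, and the "mean minus one standard deviation" ranking are hypothetical choices for illustration, not documented OpenMark AI metrics.

```python
import statistics


def stability_summary(scores: list[float]) -> dict[str, float]:
    """Summarize quality scores (0 to 1) from repeated runs of one model on one task."""
    if len(scores) < 2:
        raise ValueError("need at least two repeat runs to measure variance")
    return {
        "mean": statistics.mean(scores),
        "stdev": statistics.stdev(scores),  # sample standard deviation across runs
        "worst": min(scores),
        "best": max(scores),
    }


def risk_adjusted(scores: list[float]) -> float:
    # Assumed ranking heuristic: penalize models whose quality swings between runs.
    s = stability_summary(scores)
    return s["mean"] - s["stdev"]


# Hypothetical scores from four repeat runs of two models on the same prompt.
runs = {
    "model-a": [1.0, 0.2, 0.3, 0.25],  # one perfect run, then failures
    "model-b": [0.85, 0.8, 0.82, 0.83],  # never perfect, but steady
}

ranked = sorted(runs, key=lambda name: risk_adjusted(runs[name]), reverse=True)

if __name__ == "__main__":
    for name in ranked:
        s = stability_summary(runs[name])
        print(f"{name}: mean={s['mean']:.2f} stdev={s['stdev']:.2f} worst={s['worst']:.2f}")
```

Here `model-a` has the single best run but a low mean and a large standard deviation, while `model-b` never scores perfectly yet stays within a narrow band. That is the "occasionally lucky" pattern repeat runs are meant to expose.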

Hosted Credits (No API Key Management)

Instead of managing and securing separate API keys from providers like OpenAI, Anthropic, and Google, you use OpenMark AI credits. The platform handles all the backend connections, so you can benchmark any supported model immediately and spend your time on analysis rather than administrative setup.

Use Cases

Hostim.dev

Freelancers Delivering Projects

Freelancers can leverage Hostim.dev to quickly deploy applications for clients. The per-project billing feature allows for clear cost tracking, making it easy to hand over projects without any hidden fees or surprises.

Agencies Managing Client Projects

Agencies can efficiently manage multiple client projects by isolating each one in its own secure environment. This separation not only enhances security but also provides a clear overview of costs, helping to control budgets effectively.

Students Learning Real-World Skills

Students can use Hostim.dev to gain hands-on experience with real infrastructure. With a free trial and access to essential tools, they can deploy projects that serve as valuable portfolio pieces, showcasing their skills to potential employers.

Startups Building Scalable Applications

Startups can utilize Hostim.dev to launch their applications quickly and with minimal overhead. With built-in databases and the ability to scale resources as needed, they can focus on product development and growth without worrying about infrastructure complexities.

OpenMark AI

Validating a Model Before Feature Shipment

A product team has built a new AI-powered summarization feature and needs to choose the final model. They use OpenMark AI to benchmark GPT-4, Claude 3, and Gemini against their actual user prompts. By comparing real cost, speed, and the consistency of summary quality, they select the model that best balances performance with budget before launch.

Cost-Efficiency Analysis for Scaling Applications

A developer building a high-volume customer support agent needs to optimize long-term costs. They benchmark several high-quality and mid-tier models on their ticket classification task. OpenMark AI reveals that while a premium model is slightly more accurate, a specific mid-tier model offers 95% of the quality at 40% of the cost, providing a clear, data-backed rationale for a more sustainable scaling strategy.
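The "95% of the quality at 40% of the cost" figure from this scenario translates into quality per unit of cost with simple arithmetic. The sketch below normalizes the premium model to quality 1.0 and relative cost 1.0; the per-request price and monthly volume are hypothetical placeholders, not real provider prices.

```python
def quality_per_cost(quality: float, relative_cost: float) -> float:
    """Quality delivered per unit of (relative) cost."""
    if relative_cost <= 0:
        raise ValueError("relative_cost must be positive")
    return quality / relative_cost


def monthly_cost(cost_per_request: float, requests_per_month: int) -> float:
    return cost_per_request * requests_per_month


# Premium model normalized to quality 1.0 at relative cost 1.0.
premium_ratio = quality_per_cost(1.00, 1.00)
# Mid-tier model from the scenario: 95% of the quality at 40% of the cost.
mid_tier_ratio = quality_per_cost(0.95, 0.40)

# Hypothetical pricing and volume, for illustration only.
PREMIUM_PRICE = 0.01  # dollars per request (assumed)
MID_TIER_PRICE = PREMIUM_PRICE * 0.40
VOLUME = 1_000_000  # requests per month (assumed)

if __name__ == "__main__":
    print(f"quality per unit cost: premium {premium_ratio:.3f}, mid-tier {mid_tier_ratio:.3f}")
    print(f"monthly cost: premium ${monthly_cost(PREMIUM_PRICE, VOLUME):,.0f}, "
          f"mid-tier ${monthly_cost(MID_TIER_PRICE, VOLUME):,.0f}")
```

In other words, the mid-tier model delivers about 2.4 times as much quality per dollar. Whether a 5% quality drop is acceptable remains a product decision that the ratio alone cannot make.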

Ensuring Output Consistency for Critical Workflows

A company uses AI to extract structured data from legal documents, where inconsistent output is unacceptable. They run their extraction prompts through OpenMark AI with multiple repeat runs. The results show that some models reach high peak scores but vary too much between runs. The team chooses the model with strong, stable scores, which gives them far more confidence in how it will behave on real documents.

Rapid Prototyping and Model Selection for New Projects

A startup is exploring AI capabilities for a new research assistant tool. Instead of spending weeks integrating and testing different APIs, they use OpenMark AI to quickly describe various Q&A and synthesis tasks. In minutes, they get a ranked shortlist of the top-performing models for their domain, accelerating their prototyping phase and directing their development efforts with confidence.

Overview

About Hostim.dev

Hostim.dev is a bare-metal Platform-as-a-Service (PaaS) that streamlines backend deployment for developers. You can deploy an entire containerized application stack in minutes, with databases, caching, and storage automatically configured. Removing that DevOps overhead lets you focus on building software. Each project runs in its own isolated environment on high-performance hardware in Germany, which keeps your data in the EU and simplifies GDPR compliance. Billing is transparent, hourly, and per project, with no surprise costs. Hostim.dev targets freelancers, startups, agencies, and SaaS builders who want to go from local development to a scalable application without the hassle of traditional hosting.

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking, built for developers and product teams who must decide, before deployment, which AI model to ship with a feature. You describe the task you want to test in plain language, whether classification, translation, data extraction, RAG, or another workflow, and OpenMark AI runs your exact prompts against a catalog of 100+ models in a single session. You get side-by-side comparisons of real-world metrics: cost per request, latency, scored output quality, and, crucially, stability across repeat runs. That last metric shows a model's variance and reliability rather than a single lucky output. The hosted credit system removes the need to configure separate API keys for OpenAI, Anthropic, Google, and others for every comparison. Instead of relying on marketing datasheets, you can measure true cost efficiency: the best quality relative to what you actually pay. The goal is AI features that are not only capable but also predictable, affordable, and suited to your needs.

Frequently Asked Questions

Hostim.dev FAQ

What does the free tier include?

The free tier is a 5-day trial that lets you explore Hostim.dev's features without signing up. It includes Docker hosting and managed databases.

Can I deploy with just a Compose file?

Yes. Hostim.dev can deploy your application from a Compose file alone, which keeps deployment simple and accessible to developers of all skill levels.

Where is my app hosted?

Your applications run on high-performance bare-metal servers in Germany. Keeping your data in the EU makes GDPR compliance simpler by default.

Do I need to know Kubernetes?

No, you do not need to know Kubernetes to use Hostim.dev. It simplifies deployment processes, allowing you to focus on your application without the complexities of Kubernetes management.

OpenMark AI FAQ

How is OpenMark AI different from standard benchmark leaderboards?

Standard leaderboards use fixed, general-purpose datasets (like MMLU) that may not reflect your specific use case. OpenMark AI is built for your tasks. You provide the exact prompts and criteria, and we run real API calls, giving you metrics on cost, latency, and consistency for your unique workflow. We show you what will work in practice, not just in theory.

What does "stability across repeat runs" mean and why is it important?

It means we run your task multiple times with the same model to check whether output quality and behavior stay consistent. A model that scores perfectly once but fails the next three times is a high-risk choice for production. We show you the variance so you can select a model that delivers reliable, predictable results for your users.

Do I need to bring my own API keys for the models?

No, that's the key convenience! OpenMark AI operates on a credit system. You purchase credits and use them to run benchmarks across our entire catalog of 100+ models. We manage all the provider integrations (OpenAI, Anthropic, Google, etc.) on the backend, so you never have to configure, rotate, or secure a single external API key.

What kind of tasks can I benchmark with OpenMark AI?

You can benchmark virtually any task you would use an LLM for. This includes text classification, translation, creative writing, data extraction and structuring, question answering, code generation, agentic reasoning, RAG system evaluation, image analysis (for multimodal models), and much more. If you can describe it, you can benchmark it.

Alternatives

Hostim.dev Alternatives

Hostim.dev is a Platform-as-a-Service (PaaS) that simplifies deploying full application stacks on EU servers. Because databases and other essential services are built in, developers can focus on building software rather than managing infrastructure, and the platform suits users from freelancers to startups who want efficient, transparent deployment. Users look at alternatives for reasons such as pricing structure, feature set, or platform requirements that better match their needs. When comparing options, consider ease of deployment, the range of managed services, security and compliance, and billing transparency. Weighing these factors helps ensure a smooth transition and keeps your focus on building your product.

OpenMark AI Alternatives

OpenMark AI is a developer tool for task-level benchmarking of large language models. It helps teams make data-driven decisions by running real prompts against a vast catalog of LLMs, comparing cost, speed, quality, and stability in a single browser session. Developers often explore alternatives for various reasons. They might need a different pricing structure, require deeper integration with a specific cloud platform, or seek more specialized testing features like automated regression suites. The ideal tool varies based on a team's unique workflow and deployment stage. When evaluating other solutions, focus on what matters for your project. Look for genuine, real-time API testing, not cached benchmarks. Prioritize tools that measure consistency and variance, not just a single output. Finally, ensure the platform provides actionable metrics that directly tie model performance to your specific use case and budget.